The phrase “Social Media Saga SilkTest” has rapidly gained traction across tech forums, developer communities, and mainstream social platforms in 2026. What started as a niche discussion among software testers has evolved into a broader online conversation about automation, digital transparency, and the reliability of AI-powered systems. As hashtags trend and opinion threads multiply, many users are wondering what SilkTest really is—and why it has become such a hot topic in today’s digital ecosystem.

TL;DR: Social Media Saga SilkTest refers to the growing online debate about the role of SilkTest, an automated testing tool, on today’s AI-driven, socially connected platforms. In 2026, discussions center on transparency, automation ethics, digital trust, and the role of testing in influencer apps and social media ecosystems. The “saga” reflects user concerns, developer innovation, and the collision between automation and public perception. Understanding this topic helps users stay informed about how digital systems are validated behind the scenes.

What Is SilkTest and Why Is It Trending?

SilkTest itself is not new. Originally developed as an automated functional and regression testing tool for enterprise software, it has long been used to verify user interface behavior and catch bugs before public release. However, in 2026, SilkTest has re-entered the public conversation because of its expanded integration into social media platforms, AI content moderation systems, and influencer-driven commerce tools.

The “Social Media Saga” refers less to the software itself and more to the online discussions surrounding how automated testing tools like SilkTest are shaping user experiences in visible and invisible ways.

Key reasons SilkTest is trending include:

- Increased AI-generated content verification

- Automation in social commerce testing

- Concerns about algorithm transparency

- Public disputes over digital trust and platform reliability

In simple terms, people are no longer just interested in the apps they use—they’re curious about how those apps are tested, validated, and maintained behind the scenes.

The 2026 Context: Why the Conversation Escalated

Several developments in 2026 heightened attention around SilkTest and similar automation platforms:

- AI regulation frameworks introduced stricter auditing standards for tech companies.

- Influencer platform glitches caused revenue disruptions, triggering public outcry.

- Data transparency movements pressured companies to reveal more about internal testing processes.

When large-scale social media outages occurred earlier this year, users demanded accountability. In response, developers cited automated testing systems, including SilkTest, as part of their infrastructure. That acknowledgment unintentionally pulled an enterprise-level tool into the mainstream spotlight.

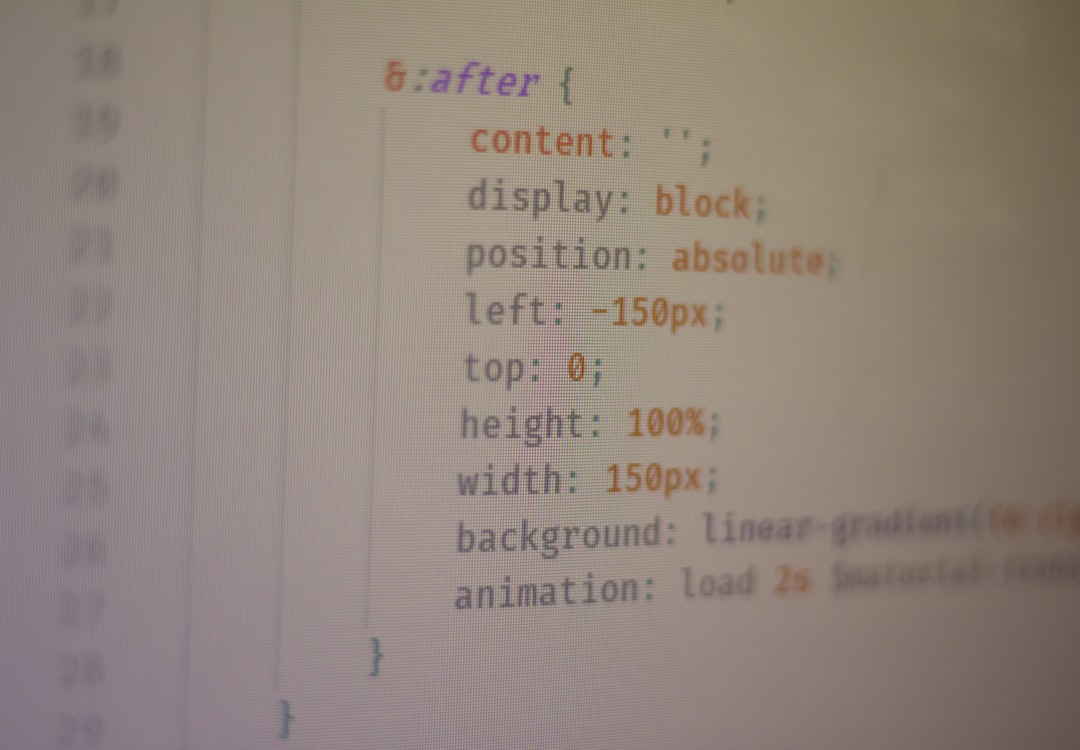

The visual of automated dashboards analyzing social engagement metrics became symbolic of the broader debate: Are these systems enhancing digital experiences, or quietly shaping them in ways users don’t fully understand?

How SilkTest Fits into Social Media Ecosystems

At its core, SilkTest performs automated functional and regression testing. But in today’s interconnected platforms, its role has expanded significantly. Modern applications rely on:

- Cross-device performance testing (mobile, tablet, desktop, AR devices)

- Automated user journey simulations

- Real-time feature validation during live updates

- Continuous deployment testing pipelines

In social media environments, this translates to testing:

- Content publishing flows

- Payment integrations for creators

- Ad delivery mechanisms

- Moderation AI responses

- Recommendation engine interfaces

Without automated tools like SilkTest, frequent updates and algorithm adjustments could easily break core platform functions. Yet the more platforms rely on automation, the more questions arise about oversight and accountability.
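To make the idea of an automated user journey check concrete, here is a minimal sketch in plain Python. It does not use SilkTest's own scripting interface; `FakePlatform`, `run_publish_journey`, and the `BASELINE` values are hypothetical names invented for illustration. The sketch walks through a content publishing flow and compares what a user would observe against a baseline assumed to come from a known-good build.

```python
from dataclasses import dataclass, field


@dataclass
class FakePlatform:
    """Hypothetical in-memory stand-in for a social app; not a real API."""
    drafts: dict = field(default_factory=dict)
    feed: list = field(default_factory=list)
    next_id: int = 1

    def create_draft(self, author: str, text: str) -> int:
        post_id = self.next_id
        self.next_id += 1
        self.drafts[post_id] = {"author": author, "text": text}
        return post_id

    def publish(self, post_id: int) -> None:
        # Publishing moves the draft into the public feed.
        post = self.drafts.pop(post_id)
        self.feed.append({"id": post_id, **post})


def run_publish_journey(platform: FakePlatform) -> list:
    """Simulate one user journey and record what the user would observe."""
    observed = []
    post_id = platform.create_draft("creator_1", "hello world")
    observed.append(("draft_saved", post_id in platform.drafts))
    platform.publish(post_id)
    observed.append(("draft_cleared", post_id not in platform.drafts))
    in_feed = any(p["id"] == post_id for p in platform.feed)
    observed.append(("visible_in_feed", in_feed))
    return observed


# Baseline assumed to have been recorded from a known-good build.
BASELINE = [
    ("draft_saved", True),
    ("draft_cleared", True),
    ("visible_in_feed", True),
]


def check_regression(platform: FakePlatform) -> list:
    """Return the observed steps whose outcome differs from the baseline."""
    observed = run_publish_journey(platform)
    return [step for step, expected in zip(observed, BASELINE) if step != expected]
```

An empty result from `check_regression` means the journey still behaves as it did in the baseline. If a later update stopped adding published posts to the feed, the `visible_in_feed` step would be reported, which is the kind of breakage regression suites are meant to catch before release.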

Why Users Are Suddenly Paying Attention

For years, backend testing tools were invisible to everyday users. So why has interest spiked?

The shift can be traced to three main trends:

1. Creator Economy Dependence

Influencers and small businesses now rely heavily on automated platforms for income. A front-end glitch caused by insufficient testing doesn’t just inconvenience users—it can suspend payment flows or hide monetized content.

2. Algorithm Transparency Debates

When engagement metrics fluctuate unexpectedly, users often blame platform bias. But sometimes these changes stem from system updates validated by testing frameworks. Tools such as SilkTest help confirm that those updates work as specified, even if their outcomes remain controversial.

3. AI Moderation Accuracy

As AI moderation systems flag or remove content, the testing of those systems becomes critical. Developers use automated suites to simulate edge cases, but critics argue that simulations can’t capture human nuance.

This tension has fueled online narratives questioning how much automation should influence digital speech spaces.
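The edge-case testing mentioned under AI moderation can be sketched as a small table of inputs and expected outcomes. The example below is an illustration only: `moderate` is a deliberately naive, hypothetical keyword rule, and `BANNED_TERMS` and `EDGE_CASES` are made up for this sketch. Real moderation systems are far more complex.

```python
import re

BANNED_TERMS = {"spam"}  # illustrative only, not a real policy list


def moderate(text: str) -> str:
    """Hypothetical rule: flag a post if it contains a banned term as a whole word."""
    words = re.findall(r"[a-z]+", text.lower())
    return "flagged" if any(w in BANNED_TERMS for w in words) else "allowed"


# Each entry pairs an input with the outcome the specification calls for.
EDGE_CASES = [
    ("", "allowed"),                        # empty post
    ("SPAM", "flagged"),                    # match is case-insensitive
    ("spam!!!", "flagged"),                 # trailing punctuation is ignored
    ("spammer", "allowed"),                 # substring is not a whole-word match
    ("I love the Spam sketch", "flagged"),  # harmless in context, still flagged
]


def run_edge_cases() -> list:
    """Return (text, expected, actual) for every case that does not match."""
    failures = []
    for text, expected in EDGE_CASES:
        actual = moderate(text)
        if actual != expected:
            failures.append((text, expected, actual))
    return failures
```

Every case passes, including the last one, because the suite encodes the rule as written rather than a human reading of the post. That is the critics' point in miniature: a green test run shows the rule is implemented consistently, not that the rule is a good one.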

The “Saga” Explained: Controversy or Misunderstanding?

The word “saga” suggests drama—but much of the debate stems from confusion about what SilkTest actually does. It does not:

- Control platform algorithms

- Decide which content goes viral

- Directly moderate user posts

Instead, it ensures that software systems function according to developer specifications. However, when those specifications themselves are controversial, the tools validating them become part of the narrative.

In online discussions, two primary viewpoints dominate:

- Automation Advocates: Argue that scalable testing improves reliability, reduces outages, and enhances user experience.

- Digital Skeptics: Question whether heavy automation distances companies from ethical responsibility.

Neither side is entirely wrong. Automation increases efficiency, but public trust depends on human governance layered over automated systems.

Automation, AI, and Ethical Oversight

In 2026, conversations about SilkTest intersect deeply with AI ethics policies. Many governments now require:

- Transparency reports for algorithm updates

- Independent testing audits

- Accessibility compliance certifications

- Bias mitigation documentation

Automated testing tools assist in meeting these standards. For example, SilkTest can simulate thousands of user scenarios to detect interface inconsistencies or workflow breakdowns.

Yet a common misconception is that automated testing guarantees fairness. In truth, it checks technical consistency, not ethical alignment: a passing suite shows that the system behaves as specified, not that the specification is fair. Ethical decisions still require human input, regulatory oversight, and interdisciplinary review.
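As a rough illustration of how scenario counts grow, the sketch below enumerates every combination of a few test dimensions and applies the same technical checks to each. It is plain Python rather than SilkTest's own tooling, and `render_checkout`, the dimension lists, and the invariants are all assumptions made for the example.

```python
from itertools import product

DEVICES = ["mobile", "tablet", "desktop", "ar"]
LOCALES = ["en", "de", "es"]
PAYMENT_METHODS = ["card", "wallet", "bank_transfer"]


def render_checkout(device: str, locale: str, payment: str) -> dict:
    """Hypothetical UI model: returns the controls a user would see."""
    labels = {"en": "Pay", "de": "Zahlen", "es": "Pagar"}
    return {
        "pay_button": labels[locale],
        "layout": "single_column" if device in ("mobile", "ar") else "two_column",
        "payment": payment,
    }


def find_inconsistencies() -> list:
    """Check every combination against the same technical invariants."""
    problems = []
    for device, locale, payment in product(DEVICES, LOCALES, PAYMENT_METHODS):
        screen = render_checkout(device, locale, payment)
        if not screen["pay_button"]:
            problems.append((device, locale, payment, "missing pay button label"))
        if screen["payment"] != payment:
            problems.append((device, locale, payment, "payment method not preserved"))
    return problems
```

Three short lists already produce 36 scenarios, and each extra dimension (account type, app version, network condition) multiplies the total, which is how suites reach the thousands. The invariants here only ask whether the screen is complete and consistent. Nothing in them asks whether the checkout terms treat creators fairly, and that question stays with people.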

What This Means for Everyday Social Media Users

Even if you never directly interact with SilkTest, its presence influences your digital experience. You benefit from:

- Fewer app crashes

- Smoother updates

- Stable payment processing

- Improved accessibility features

But you may also notice:

- Rapid rollout of new features

- Subtle interface changes

- Algorithm adjustments that alter engagement patterns

These shifts highlight the complex dance between experimentation and stability that platforms must manage.

The Developer Perspective

For engineering teams, the inclusion of SilkTest or similar automation tools is less about controversy and more about survival. Modern applications update multiple times per week—sometimes multiple times per day.

Manual testing simply cannot keep pace with:

- Real-time feature deployment

- Multi-device compatibility demands

- API integration complexity

- Security patch urgency

The viral social media narratives surrounding SilkTest may seem exaggerated to developers who view it as standard infrastructure. However, the growing public curiosity about backend processes signals a broader cultural shift toward digital literacy.

Separating Myth from Reality

As online threads multiply, it’s helpful to clarify common myths:

- Myth: SilkTest manipulates user feeds.

Reality: It validates that feed features operate as programmed.

- Myth: Automated testing replaces all human oversight.

Reality: Most enterprises combine automation and manual review.

- Myth: Testing tools collect personal user data for analysis.

Reality: Tests typically simulate data scenarios in controlled environments.

The line between tooling and policy can blur in social media discourse, but understanding their distinction is critical.

The Broader Implication: A More Curious Internet

Perhaps the most interesting takeaway from the Social Media Saga SilkTest discussion isn’t about the tool itself. It’s about evolving user awareness. In 2026, people care more deeply about:

- How digital systems are built

- Who audits algorithmic changes

- What safeguards exist before updates go live

- How errors are prevented and corrected

This curiosity reflects a digital culture maturing beyond surface-level user experience into structural understanding.

Final Thoughts: Why It Matters

The Social Media Saga surrounding SilkTest illustrates a defining reality of 2026: backend technology is no longer invisible. As automation and AI reshape platforms, users are demanding transparency not only in policies but in infrastructure.

SilkTest remains fundamentally a technical solution—an automation tool ensuring software reliability. Yet its sudden visibility in social discourse reveals something bigger: a growing insistence on accountability in a hyper-connected world.

Whether you view automated testing as a silent guardian of digital stability or as part of a larger automation wave that requires careful oversight, one thing is clear—conversations about tools like SilkTest are unlikely to fade anytime soon.

In an era where trust in technology can shift with a trending hashtag, understanding the systems behind the screens is no longer optional. It’s becoming part of being an informed digital citizen.